This blog is all about emerging technologies that might seem unreal but are going to shape our future.

The future of robotics: Humanoid machines with the ability to express genuine emotions.

They’re already here – driving cars, vacuuming carpets and feeding hospital patients. They may not be walking, talking, human-like sentient beings, but they sure are clever… and a little creepy.

The idea that machines could perform to such standards is startling. Driving is a complex task that takes humans a long time to perfect. Yet here were a bunch of jumped-up laptops controlling cars like veteran chauffeurs.

Even more striking was the fact that the collision between the robot Land Rover, built by researchers at the Massachusetts Institute of Technology, and the Tahoe, fitted out by Cornell University AI experts, was the only scrape in the entire competition. Yet only three years earlier, at DARPA’s first driverless car race, every robot competitor – directed to navigate across a stretch of open desert – either crashed or seized up before getting near the finishing line. At the following year’s race, six robot cars completed the course.

So DARPA, which is responsible for developing new technology for the U.S. military, moved the goalposts from the desert to the city for its next, far more exacting, competition in 2007: the Urban Challenge. A total of 83 robot cars, fitted with complex video- and radar-guidance systems, competed for the US$2 million (A$2.2 million) prize.

Each had its on-board computer loaded with a digital map and route plans, and was instructed to negotiate busy roads (some cars were driven by human volunteers, others by robots); differentiate between pedestrians and stationary objects; determine whether other vehicles were parked or moving off; and perform various parking manoeuvres (which robots turn out to be unexpectedly adept at).

Six of the 83 cars completed the 100 km course. So in three years, robot cars that could not even cross flat empty land progressed to being able to handle the horrors of city traffic.

It is a remarkable transition that has clear implications for the car of the future. More importantly, it demonstrates how robotics sciences and AI have progressed in the past few years – a point stressed by Bill Gates, the Microsoft boss who is almost a Messianic convert to these causes.

“The robotics industry is developing in much the same way the computer business did 30 years ago,” he argues. As he points out, robotic arms help perform simple surgery today; domestic robots vacuum floors; while electronics companies make toys that mimic pets and children with increasing sophistication.

“I can envision a future in which robotic devices will become a nearly ubiquitous part of our day-to-day lives,” says Gates. “We may be on the verge of a new era, when the PC will get up off the desktop and allow us to see, hear, touch and manipulate objects in places where we are not physically present.”

Intelligent machines are already making a considerable impact in everyday life. One job in 10 in the car industry is done by a robot, while in Iraq, U.S. forces employ 4,000 robots to help defuse bombs, fly drones over enemy lines and carry out other dangerous work. U.S. military chiefs have now decreed that a third of ground vehicles will be driverless by 2015.

The worlds of writers such as American sci-fi luminary Isaac Asimov, who envisaged robots as clever, compassionate companions, may soon be upon us. Not everyone relishes the prospect. Machines could just as easily turn against their creators, as seen in movies such as The Matrix, Westworld or The Stepford Wives, in which humans are enslaved, killed or replaced by robots.

But are we really likely to be hunted down by The Terminator robots or pursued by androids like those in Ridley Scott’s Blade Runner? Or will our lives be easier thanks to servile Star Wars droids like C-3PO and R2-D2? Is the robot envisaged in science fiction realistic at all?

Today’s robot cars look impressive but they fall a long way short of the intelligence of HAL, the brilliant but malfunctioning supercomputer in Arthur C. Clarke’s 2001: A Space Odyssey. What is the potential for robots and computers in the near future?

“The fact is we still have a way to go before real robots catch up with their science fiction counterparts,” Gates says.

So what are the stumbling blocks? One key difficulty is getting robots to know their place. This has nothing to do with class or etiquette, but concerns the simple issue of positioning. Humans orient themselves with other objects in a room very easily. Robots find the task almost impossible. “Even something as simple as telling the difference between an open door and a window can be tricky for a robot,” says Gates. This has, until recently, reduced robots to fairly static and cumbersome roles.

For a long time, researchers tried to get round the problem by attempting to recreate the visual processing that goes on in the human cortex. However, that challenge has proved to be singularly exacting and complex. So scientists have turned to simpler alternatives: new generations of sensors that can identify smells, chemicals and acids; that measure temperature; and – most importantly – that use infrared beams and laser rangefinders to pinpoint objects. These are now providing robots with sensing arrays that can give them far clearer ideas of their orientation and position.

“We have become far more pragmatic in our work,” says Nello Cristianini, professor of artificial intelligence at the University of Bristol in England and associate editor of the Journal of Artificial Intelligence Research. “We are no longer trying to recreate human functions. Instead we are looking for simpler solutions, with basic electronic sensors, for example.”

This approach is exemplified by vacuuming robots such as the Electrolux Trilobite. The Trilobite scuttles around homes pinging out ultrasound signals to create maps of rooms, which are remembered for future cleaning. Technology like this is now changing the face of robotics, says philosopher Ron Chrisley, director of the Centre for Research in Cognitive Science at the University of Sussex in England. “Sensors allow us to take the bottom-up approach to making robots.

“Artificial intelligence experts spent a long time trying to build machines like a robot butler that would understand its owner’s needs or a robot that had a complex visual cortex. Now we have found that a more fruitful path is to build simple domestic machines, like robo-vacuum cleaners. Then we can work up from there.

“Robots first learn basic competence – how to move around a house without bumping into things. Then we can think about teaching them how to interact with humans,” he said.
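To make the map-building idea concrete, here is a minimal Python sketch of how ultrasound pings could be turned into a room map. It is not the Trilobite's actual software: the grid layout, the `update_map` function, the four ping directions and the distances measured in grid cells are all assumptions made for illustration.

```python
# Unit steps (row, col) for four hypothetical ping directions.
DIRECTIONS = {"north": (-1, 0), "south": (1, 0), "east": (0, 1), "west": (0, -1)}


def update_map(grid, robot, pings):
    """Update a room map from one set of pings.

    grid  -- list of lists of characters; '?' means unknown
    robot -- (row, col) of the robot (assumed known here)
    pings -- {direction: distance in cells to the echo}
    Cells the ping passed through become '.', the echoing cell becomes '#'.
    """
    rows, cols = len(grid), len(grid[0])
    r0, c0 = robot
    grid[r0][c0] = "."  # the robot's own cell is clearly free
    for direction, distance in pings.items():
        dr, dc = DIRECTIONS[direction]
        for step in range(1, distance + 1):
            r, c = r0 + dr * step, c0 + dc * step
            if not (0 <= r < rows and 0 <= c < cols):
                break  # the echo came from outside the mapped area
            grid[r][c] = "#" if step == distance else "."
    return grid


room = [["?"] * 4 for _ in range(3)]
update_map(room, robot=(1, 1), pings={"east": 2, "north": 1})
for row in room:
    print("".join(row))
```

Each call fills in a little more of the grid, so a robot that keeps pinging as it moves would gradually replace the unknown cells with free space and obstacles. A real vacuum cleaner also has to work out where it is, which this sketch simply takes as given.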

Last year, a new Hong Kong restaurant, Robot Kitchen, opened with a couple of sensor-laden humanoid machines directing customers to their seats. Each possesses a touch-screen on which orders can be keyed in. The robot then returns with the correct dishes. In Japan, University of Tokyo researchers recently unveiled a kitchen ‘android’ that could wash dishes, pour tea and make a few limited meals.

The ultimate aim is to provide robot home helpers for the sick and the elderly, a key concern in a country like Japan where 22 per cent of the population is 65 or older. Over US$1 billion a year is spent on research into robots that will be able to care for the elderly.

One of the first fruits of endeavours such as these is the My Spoon robot created by Secom, a small and innovative Japanese security company. My Spoon can feed disabled or incapacitated people by breaking up food and spooning it into their mouths. Then there’s Paro, a Japanese autonomous robot that looks like a baby harp seal, expresses feelings, moves its head and legs and responds to elderly patients who give it a cuddle, while surreptitiously monitoring their heart rate and other parameters for symptoms of stroke and heart attack.

Machines such as these take researchers into the field of socialised robotics: how to make robots act in a way that does not scare or offend individuals. “We need to study how robots should approach people, how they should appear. That is going to be a key area for future research,” adds Chrisley.

The development of carer robots also suggests intelligent machines will soon have other advanced roles in hospitals and surgeries. Some will simply aid doctors by pinpointing tumours or lesions during operations, for example. Others will function in far subtler, more sophisticated ways. Dmitry Oleynikov, at the University of Nebraska Medical Centre in Omaha, USA, is developing miniature robots that will be inserted inside abdominal cavities to assist in surgery. At the same time, researchers at Carnegie Mellon University in Pittsburgh, Pennsylvania, USA, are working on the HeartLander, a ‘caterpillar’ robot that enters the chest through an incision and then moves about to administer treatments.

Others envisage a future in which ‘snake-arm robots’ will weave their way around the human body, equipped with lights, high-frequency cutters and sealers to administer treatment when required. “These are long, slender robot arms that don’t have elbows and can therefore ‘nose-follow’, like a snake, into confined spaces – for example, in the human body,” says Rob Buckingham, managing director of OC Robotics in Bristol, England, a company that is developing this technology.

Then there is the issue of computing power. A megahertz of computing power cost US$7,000 in 1970. Today it can be purchased for a few cents. Similarly, the price of data storage has plummeted, providing AI researchers with the processing power they need to tackle other problem areas – a key example being mobility.

At present a robot’s computer brain has to read data from each of its sensors, one after another, before it can work out its exact position. It is laborious and seriously limits speed. Increased computing power and development of multiple processors will allow these readings to be analysed simultaneously – while also pushing AI experts to design software that can deal with multiple data inputs.
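The difference between reading sensors one after another and reading them together can be sketched in a few lines of Python. The sensor names, readings and delays below are made up, and threads stand in for the multiple processors described above; real robot software would look quite different.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sensors: name -> (fixed reading, simulated read delay in seconds).
SENSORS = {
    "infrared": (0.82, 0.05),
    "laser": (1.97, 0.05),
    "ultrasound": (2.10, 0.05),
}


def read_sensor(name):
    """Pretend to query one sensor; the sleep models a slow hardware read."""
    value, delay = SENSORS[name]
    time.sleep(delay)
    return name, value


def read_sequential(names):
    """Read each sensor in turn, so the total time is the sum of all delays."""
    return dict(read_sensor(name) for name in names)


def read_concurrent(names):
    """Read all sensors at once, so the total time is roughly the slowest read."""
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        return dict(pool.map(read_sensor, names))


names = list(SENSORS)
for reader in (read_sequential, read_concurrent):
    start = time.perf_counter()
    readings = reader(names)
    print(reader.__name__, readings, f"{time.perf_counter() - start:.2f}s")
```

Both functions return the same readings; only the waiting time differs. With three sensors the saving is small, but it grows with every sensor added, which is why the software also has to be designed to cope with many inputs arriving at once.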

This field is driven by the need to build complex automated robots that can work in hostile environments at speed: nuclear reactor repair vehicles, submersibles and rover vehicles for European and U.S. missions to Mars. Consider the current generation of Mars rovers. They move at a snail’s pace because they can only check, with painful slowness, the input from each of their sensors.

Future craft, such as Europe’s ExoMars project, will be designed to move much more quickly across the planet’s surface in their search for life, and will require considerable use of multiple-processor computing. Similarly, missions to the moons of Jupiter, such as Europa – where ice-covered seas may harbour underwater life forms – will require spacecraft to carry robot submersibles capable of even more rapid data processing.

Devices like these should be in operation in a decade or so. By then, robots will also be helping to care for the elderly, playing important roles in surgical operations, controlling our cars and replacing soldiers on the battlefield. This still leaves us some way short of the intelligent machines envisaged by Clarke or Asimov, however – a point acknowledged by Ronald Arkin, a robotics expert who is director of the Mobile Robot Laboratory at Georgia Institute of Technology in Atlanta, USA.

“It is almost impossible to predict when machines will become as clever as humans,” he says. “That will depend on breakthroughs that still have to take place, and given that research in AI progresses in fits and starts, it is impossible to predict timescales accurately.”

According to Arkin, two major areas of research need to be addressed before computers acquire the intelligence of humans: vision and the processing of information as it flows through the human cortex. “We will not create the artificial equivalent of human intelligence until we understand these issues,” he says.

“That requires breakthroughs in brain research that have yet to be made, although work in magnetic resonance imaging holds great promise,” he adds. “Researchers can now watch areas of the brain light up as individuals carry out specific mental tasks. When we have that knowledge, we can pass it on to computers.”

Most researchers say it will take several decades to achieve that goal. However, futurologist and computer expert Ray Kurzweil believes artificial intelligence could be on a par with human intellect by the 2020s. After that, machine intelligence would outstrip that of the human brain as computers and robots learn to communicate, teach and replicate among themselves. “Once non-biological intelligence matches the range and subtlety of human intelligence, it will soar past it because of the acceleration of information-based technologies and the ability of machines to instantly share their knowledge,” Kurzweil says. In short, humanity will become just the means to a technological end.

The prognosis for humanity in such a world would not seem to be too healthy. “It certainly suggests there is a good case for ensuring these machines are programmed to the right ethical standards,” adds Arkin.

Most researchers believe such a prognosis is remote, particularly given current rates of research and development. On the other hand, we should not despair of machines showing any intelligence at all in the near future.

“Look at Google or Amazon,” says Cristianini. “Both display the attributes of intelligence. For example, Amazon is designed to recommend books to users based on the preferences of other people and to suggest works about which its creators could have known nothing. That is intelligence. So, in a sense, AI is with us already.”
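The kind of recommendation Cristianini describes can be illustrated with a toy Python sketch. This is not Amazon's actual algorithm, just the general idea of suggesting books based on what similar readers chose; the users, their purchases and the `recommend` function are all hypothetical.

```python
from collections import Counter

# Hypothetical purchase histories, made up for illustration.
PURCHASES = {
    "ana": {"I, Robot", "2001: A Space Odyssey", "Neuromancer"},
    "ben": {"I, Robot", "2001: A Space Odyssey"},
    "cho": {"I, Robot", "Neuromancer", "Dune"},
}


def recommend(user, purchases, top_n=3):
    """Suggest books the user lacks, weighted by how much other readers overlap."""
    own = purchases[user]
    scores = Counter()
    for other, books in purchases.items():
        if other == user:
            continue
        overlap = len(own & books)  # shared titles act as a crude similarity score
        if overlap == 0:
            continue  # readers with nothing in common contribute nothing
        for book in books - own:
            scores[book] += overlap
    # Highest score first; ties are broken alphabetically so the result is stable.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [book for book, _ in ranked[:top_n]]


print(recommend("ben", PURCHASES))
```

Nobody told the program that readers of Asimov might like a particular title; the suggestion falls out of other people's choices, which is the sense in which the system can recommend works its creators knew nothing about.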

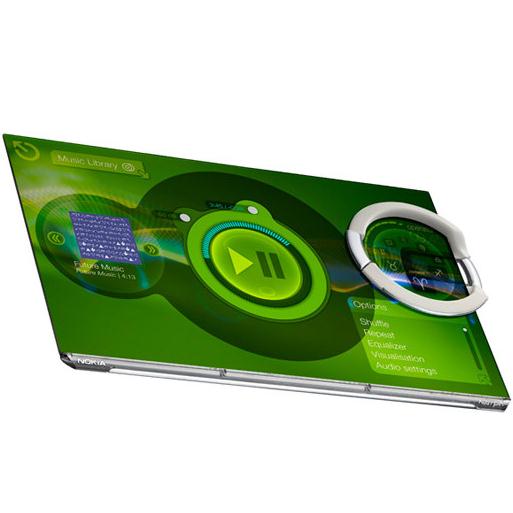

Morph is a concept that demonstrates how future mobile devices might be stretchable and flexible, allowing the user to transform their mobile device into radically different shapes. It demonstrates the ultimate functionality that nanotechnology might be capable of delivering: flexible materials, transparent electronics and self-cleaning surfaces.

Dr. Bob Iannucci, Chief Technology Officer, Nokia, commented: “Nokia Research Center is looking at ways to reinvent the form and function of mobile devices; the Morph concept shows what might be possible”.

Dr. Tapani Ryhanen, Head of the NRC Cambridge UK laboratory, Nokia, commented: “We hope that this combination of art and science will showcase the potential of nanoscience to a wider audience. The research we are carrying out is fundamental to this as we seek a safe and controlled way to develop and use new materials.”

Professor Mark Welland, Head of the Department of Engineering’s Nanoscience Group at the University of Cambridge and University Director of Nokia-Cambridge collaboration added: “Developing the Morph concept with Nokia has provided us with a focus that is both artistically inspirational but, more importantly, sets the technology agenda for our joint nanoscience research that will stimulate our future work together.”

The partnership between Nokia and the University of Cambridge was announced in March 2007 – an agreement to work together on an extensive and long-term programme of joint research projects. NRC has established a research facility at the University’s West Cambridge site and collaborates with several departments – initially the Nanoscience Center and Electrical Division of the Engineering Department – on projects that, to begin with, are centered on nanotechnology.

Elements of Morph might be available to integrate into handheld devices within seven years, though initially only at the high end. However, nanotechnology may one day lead to low-cost manufacturing solutions, and offers the possibility of integrating complex functionality at a low price.

In order to combat a potential nuclear attack, the United States has been developing a space-based missile defense system for the past two decades. This defense system began under former U.S. President Ronald Reagan. His Strategic Defense Initiative (SDI) called for the development of laser weaponry that would orbit the earth to shoot down ballistic missiles. There is now even talk of the United States developing a fifth military branch, perhaps called Space Force, that would pick up where the Air Force leaves off.

More details: http://science.howstuffworks.com/space-war.htm

This might be the perfect watch for the sportsperson or those who are style conscious. The outlines of the device are meant to be fluorescent, with the interior sporting a transparent surface so you can show off your fashion-forward sensibility.

This mind-controlled headset, called the EPOC, is a human-computer interface. Using this device, you can instruct your computer to perform tasks just by thinking of them. First, you have to train the device's software by thinking of the different commands, actions and movements you might want to carry out in a video game.

The device maps your brain activity using medical technology. You can then play your game hands-free, just by thinking.

That may be a little aggressive, but Princeton University engineers have developed a device that may change the way that we power many of our smaller gadgets. They have created a small chip that captures the energy of our natural body movement and harnesses it to power things such as a cell phone, a pacemaker and many other small electronic devices.

The chip is actually a combination of rubber and ceramic nanoribbons. When the chip is flexed, it generates electrical energy. How will this be put to use? Think of rubber-soled shoes that have this chip embedded in them: every time a step is taken, energy is created and stored. Just walking around the office during a normal workday would be enough to keep that cell phone powered.

Humans aren't the swiftest creatures on Earth, and most of us are limited in the amount of weight that we can pick up and carry. These weaknesses can be fatal on the battlefield, and that's why the U.S. Defense Advanced Research Projects Agency (DARPA) is investing $50 million to develop an exoskeleton suit for ground troops. This wearable robotic system could give soldiers the ability to run faster, carry heavier weapons and leap over large obstacles.

Photo courtesy DARPA. Exoskeletons will give soldiers the ability to move faster while carrying more weight.

Basically, an exoskeleton is a wearable machine that gives a human enhanced abilities. Imagine a battalion of super soldiers that can lift hundreds of pounds as easily as lifting 10 pounds and can run twice their normal speed. The potential of non-military applications is also phenomenal. In 2000, DARPA requested proposals for human performance augmentation systems, and will soon be signing contracts to begin developing exoskeletons. The military agency said that the testing of this new technology is at least a decade away. It will be much longer before soldiers are donning these body amplification systems for battle.

These exoskeletal systems are expected to give soldiers amplified strength and speed, and will also have built-in computers to aid soldiers in navigating foreign territories. Questions still remain about how these machines will be powered and how they will respond to human motion.